AI is everywhere, and now it’s in your report designer, too. With a simple AI$() designer function, you can embed the power of OpenAI or other LLMs directly into your List & Label reports. Sounds good? Let’s unpack how this works and why it could become one of your favorite new features.

See the new AI$ feature in action in our YouTube video, or read on for the details:

A Small Designer Extension Function for a Giant Leap

Traditionally, List & Label functions let you format strings, calculate numbers, or control layout. With the AI$() designer function, we’re venturing into different territory: natural language processing. The idea is simple: pass a prompt to an AI model and use the result right in your report.

Whether you want a summary, a translation, or a more elegant wording, AI$() does the job. Under the hood, it connects to the OpenAI service (or any other supported LLM) using Microsoft’s Semantic Kernel SDK.

Let’s start with the core code.

How It Works Internally

Here’s the method that performs the actual AI call. The builder and kernel objects could be cached here, of course. All you need to do is add a reference to the Semantic Kernel package to your app and add a new designer function to your ListLabel instance.

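    // Requires: using System.Threading.Tasks; using Microsoft.SemanticKernel;
    private async Task<string> GetOpenAIResultAsync(string prompt)
    {
        // Configure a kernel that talks to the OpenAI chat completion service.
        var builder = Kernel.CreateBuilder();
        var apiKey = "<your-secret-key-here>";
        builder.AddOpenAIChatCompletion(
            modelId: "o4-mini",
            apiKey: apiKey
        );
        var kernel = builder.Build();

        // Wrap the prompt in a kernel function, invoke it, and return the text.
        var chatFunction = kernel.CreateFunctionFromPrompt(prompt);
        var result = await kernel.InvokeAsync(chatFunction);
        return result.ToString();
    }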

Since the function is asynchronous but the evaluation of a report must happen synchronously (because the engine needs the full result before rendering), we need a pseudo-synchronous workaround:

    private void aiFunction_EvaluateFunction(object sender, EvaluateFunctionEventArgs e)
    {
        // Block until the async call completes; the engine needs the value right away.
        e.ResultValue = Task.Run(() => GetOpenAIResultAsync(e.Parameter1.ToString())).Result;
        e.ResultType = LlParamType.String;
    }

This does the trick, but repeated calls with the same prompt would unnecessarily cost time and tokens. Let’s improve it.

Caching for Speed and Sanity

A simple in-memory cache using a Dictionary<string, string> ensures that each distinct prompt is sent to the model only once:

    private Dictionary<string, string> _llmResults = new();

    private void aiFunction_EvaluateFunction(object sender, EvaluateFunctionEventArgs e)
    {
        string prompt = e.Parameter1.ToString();
        e.ResultType = LlParamType.String;

        // Serve repeated prompts from the cache without calling the model again.
        if (_llmResults.TryGetValue(prompt, out var result))
        {
            e.ResultValue = result;
            return;
        }

        // First time we see this prompt: call the model and remember the answer.
        string aiResult = Task.Run(() => GetOpenAIResultAsync(prompt)).Result;
        _llmResults[prompt] = aiResult;
        e.ResultValue = aiResult;
    }
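A plain Dictionary is fine as long as the handler is only ever called from one thread. If that cannot be guaranteed in your application (an assumption; the post does not say how the handler is invoked), a thread-safe variant is one option. The following is a minimal sketch, not part of List & Label or Semantic Kernel: the class name PromptCache and the delegate parameter askModel are hypothetical, and the delegate stands in for GetOpenAIResultAsync so the example runs without an API key.

```csharp
using System;
using System.Collections.Concurrent;
using System.Threading.Tasks;

// Hypothetical helper: caches one answer per distinct prompt, safely across threads.
public sealed class PromptCache
{
    // Lazy<string> makes sure the model is asked at most once per prompt,
    // even if several threads request the same prompt at the same time.
    private readonly ConcurrentDictionary<string, Lazy<string>> _results = new();
    private readonly Func<string, Task<string>> _askModel;

    public PromptCache(Func<string, Task<string>> askModel)
    {
        _askModel = askModel ?? throw new ArgumentNullException(nameof(askModel));
    }

    // Returns the cached answer, or blocks until the first answer has arrived.
    public string GetOrFetch(string prompt)
    {
        var lazy = _results.GetOrAdd(prompt, p => new Lazy<string>(
            () => Task.Run(() => _askModel(p)).GetAwaiter().GetResult()));
        try
        {
            return lazy.Value;
        }
        catch
        {
            // A Lazy caches its exception; drop the entry so a later call can retry.
            _results.TryRemove(prompt, out _);
            throw;
        }
    }
}
```

In the event handler, this could replace the dictionary lookup: create one instance with new PromptCache(GetOpenAIResultAsync) and assign cache.GetOrFetch(prompt) to e.ResultValue. Failed calls are deliberately not cached, so a temporary network error does not stick to a prompt for the rest of the session.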

Why Not Async?

Report generation is a tightly timed process. Every function call must deliver a result now, not “sometime later”. Even though .NET supports async code, List & Label’s evaluation logic must be synchronous so that it can determine things like cell height or page breaks in advance.

This makes the Task.Run(...).Result hack necessary, albeit slightly inelegant. It’s a price we pay for combining LLMs with a rendering engine designed for certainty.
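Two practical details of this blocking pattern are worth a sketch. First, Task.Run moves the call to the thread pool, so the awaited continuations do not need the (blocked) calling thread; this avoids the classic deadlock when blocking on async code from a UI thread. Second, .Result wraps failures in an AggregateException, whereas GetAwaiter().GetResult() rethrows the original exception. The helper below is a hypothetical illustration (the name SyncBridge, the timeout, and the fallback text are assumptions, not List & Label features) that also gives up after a time limit so a slow model cannot stall the report indefinitely.

```csharp
using System;
using System.Threading.Tasks;

// Hypothetical helper: runs an async call synchronously with a time limit.
public static class SyncBridge
{
    // Blocks until asyncCall finishes or the timeout elapses.
    // Returns the fallback text on timeout; rethrows the original exception on failure.
    public static string Run(Func<Task<string>> asyncCall, TimeSpan timeout, string fallback)
    {
        // Start the work on the thread pool so it never depends on the calling thread.
        Task<string> task = Task.Run(() => asyncCall());

        // WhenAny itself does not throw; it just reports which task finished first.
        Task finished = Task.WhenAny(task, Task.Delay(timeout)).GetAwaiter().GetResult();
        if (finished != task)
        {
            // Timed out: the call keeps running in the background, its result is ignored.
            return fallback;
        }

        // Completed: return the value, or rethrow the original exception unwrapped.
        return task.GetAwaiter().GetResult();
    }
}
```

Inside the event handler, a call such as SyncBridge.Run(() => GetOpenAIResultAsync(prompt), TimeSpan.FromSeconds(30), "(no answer)") could take the place of Task.Run(...).Result. The 30-second limit and the placeholder text are arbitrary choices for illustration.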

What Can You Do with AI$()?

Let’s get practical. Here are some ideas:

- Translate product descriptions:

AI$("Translate 'Kaffeemaschine mit Thermoskanne' to English")

- Summarize long texts:

AI$("Summarize this: ...")

- Generate catchy titles:

AI$("Write a headline for this product: ...")

- Rephrase content more politely:

AI$("Rephrase: 'Return at your own cost' in a friendlier tone")

- Create code snippets from a description:

AI$("Write a C# code snippet that calculates factorial")

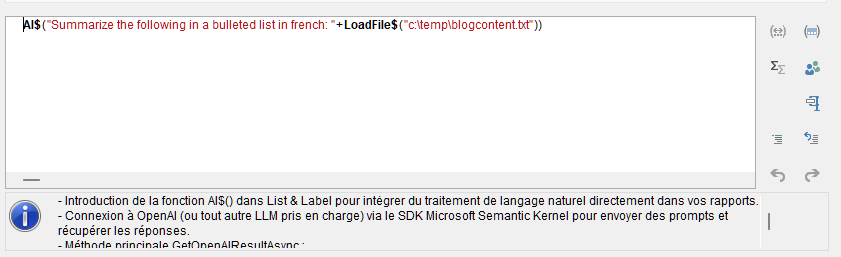

Use cases are only limited by your creativity—and OpenAI’s token limits. Here’s what happens if I summarize this blog post in French in the Designer:

Final Thoughts

Embedding AI in List & Label via the AI$() function opens new horizons for dynamic, intelligent reporting. With Semantic Kernel, we’ve got a powerful abstraction layer to easily adapt to new LLMs, models, or prompt templates. It’s your copilot in report design—just a function call away.

Give it a try. And let us know what prompt got you the most surprising or delightful result!